How to Write an SLP Evaluation Report

A well-written evaluation report does more than document test scores. It tells the story of a client's communication profile, connects formal and informal findings to real-life function, and provides the clinical rationale that drives eligibility decisions, insurance authorizations, and treatment planning. Whether you are a graduate student drafting your first report or a working clinician refining your documentation skills, a consistent structure and reader-centered writing style will make your reports more effective.

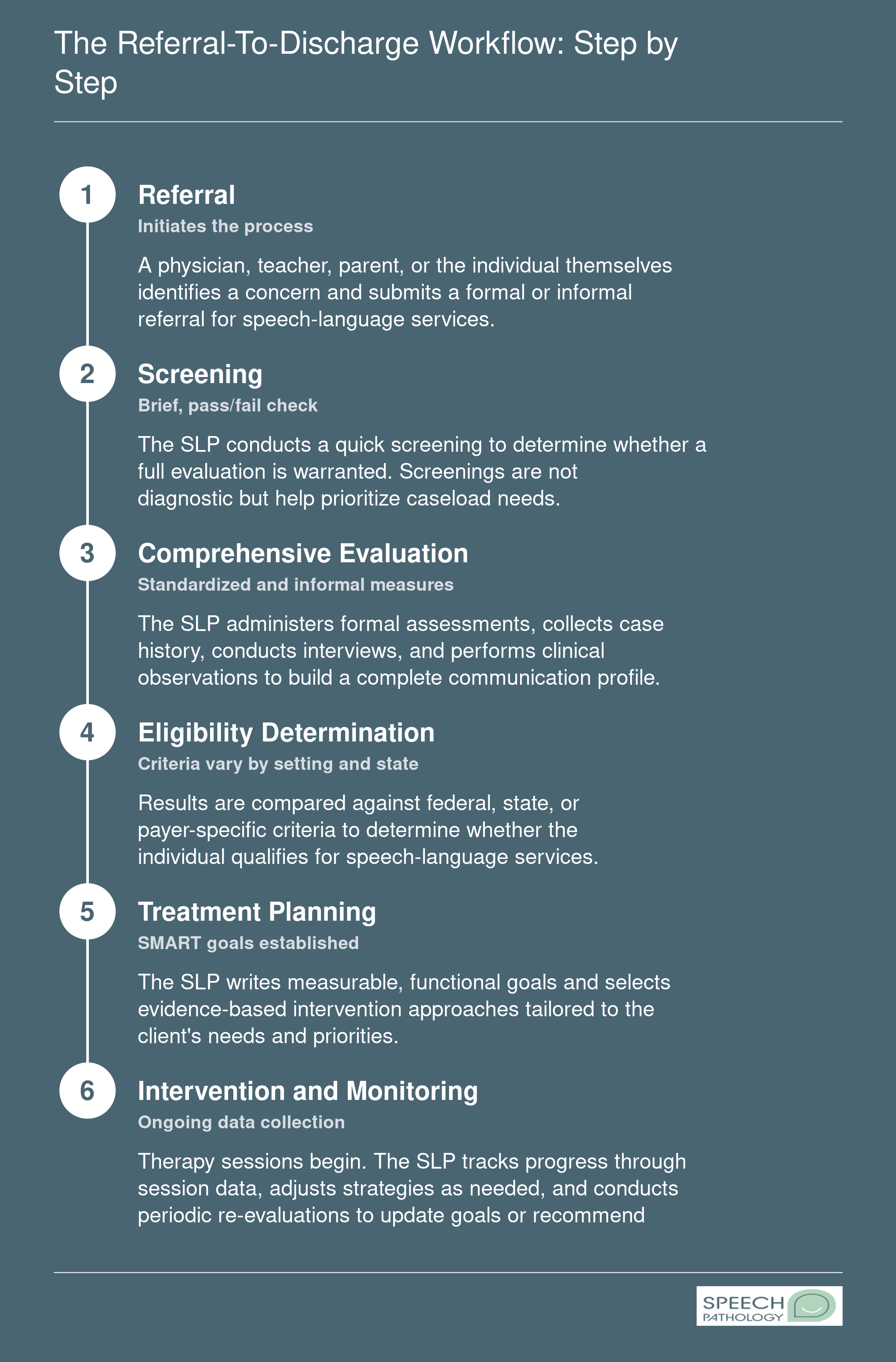

Section-by-Section Report Template

Most SLP evaluation reports follow a predictable framework. Organizing yours around these sections keeps information easy to locate for every reader, from parents to insurance reviewers.

- Identifying information: Client name, date of birth, date of evaluation, referral source, and the clinician's credentials.

- Reason for referral: A concise statement explaining why the evaluation was requested and by whom.

- Background and history: Relevant developmental, medical, educational, and social history gathered from caregiver interviews, medical records, and prior reports.

- Assessment procedures: A list of all formal tests, informal measures, observations, and language samples administered during the evaluation.

- Results by domain: Findings organized by communication area (e.g., articulation, receptive language, expressive language, fluency, voice, pragmatics, feeding and swallowing) with both quantitative data and qualitative descriptions.

- Clinical impressions: A synthesis of all results into a cohesive profile, including severity ratings and functional impact statements.

- Recommendations: Specific therapy recommendations, referrals, frequency and duration of services, and any accommodations or home strategies.

This structure works across settings, whether you practice in schools, hospitals, private clinics, or early intervention programs.
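If you draft reports from a digital template, the section list above can double as a completeness check. The following is a minimal sketch, not a standard tool: the section names are copied from the list above, and `missing_sections` and the sample `draft` are hypothetical names invented for illustration.

```python
# Section names taken from the template above, in reading order.
REQUIRED_SECTIONS = [
    "Identifying information",
    "Reason for referral",
    "Background and history",
    "Assessment procedures",
    "Results by domain",
    "Clinical impressions",
    "Recommendations",
]


def missing_sections(draft: dict) -> list:
    """Return the template sections that are absent or blank in a draft."""
    return [
        name
        for name in REQUIRED_SECTIONS
        if not draft.get(name, "").strip()
    ]


# Hypothetical partial draft: two sections written, one left blank.
draft = {
    "Identifying information": "Name, DOB, evaluation date, referral source, credentials",
    "Reason for referral": "Referred by classroom teacher for difficulty following directions.",
    "Results by domain": "   ",
}

print(missing_sections(draft))
```

A whitespace-only section is treated as missing, so an empty placeholder heading does not pass the check.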

Write for the Reader, Not the Clinician

Parents, teachers, and case managers are among the most frequent readers of your reports, and none of them needs a paragraph copied from a test manual explaining what the Goldman-Fristoe Test of Articulation measures. Instead, translate scores into functional language. Rather than stopping at "Client scored a standard score of 72 on the CELF-5 Receptive Language Index," follow the score with something like: "Her receptive language skills fall well below expectations for her age. In practical terms, she is likely to have difficulty following multi-step classroom directions and understanding grade-level reading passages."

Every score you report should be paired with a plain-language explanation of what it means for the client's daily communication. This approach respects the reader's time and builds trust with families who may feel overwhelmed by clinical terminology.
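One way to begin a plain-language explanation is to place the score on the normal curve. The sketch below assumes a standard-score scale with a mean of 100 and a standard deviation of 15 and a normally distributed norm sample; the function name `plain_language` is hypothetical. Percentile ranks printed in a test's own norm tables take precedence over this approximation, and the functional description still has to come from the clinician.

```python
from statistics import NormalDist


def plain_language(score, mean=100.0, sd=15.0):
    """Describe a standard score relative to the mean.

    Assumes a normal distribution; mean=100 and sd=15 are defaults
    chosen for illustration and should match the test being reported.
    """
    z = (score - mean) / sd                  # distance from the mean in SD units
    percentile = NormalDist().cdf(z) * 100   # approximate percentile rank
    direction = "below" if z < 0 else "above"
    return (
        f"A standard score of {score} is about {abs(z):.1f} standard "
        f"deviations {direction} the mean "
        f"(percentile rank of roughly {percentile:.0f})."
    )


print(plain_language(72))
```

For the standard score of 72 used in the example above, this yields a distance of about 1.9 standard deviations below the mean and a percentile rank of roughly 3, which supports the phrase "well below expectations for her age."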

Documenting Severity, Functional Impact, and Recommendations

Severity ratings and functional impact statements are critical for two audiences: school teams determining eligibility and insurance reviewers authorizing services. Use consistent descriptors (mild, moderate, severe, profound) and tie them directly to observable limitations. For example, stating that a client's severe expressive language disorder results in frequent communication breakdowns during peer interactions and limits participation in classroom discussions gives decision-makers the context they need.

Your recommendations section should be specific enough to justify the services you are requesting. Instead of writing "speech therapy is recommended," specify the frequency (e.g., two 30-minute sessions per week), the targeted domains, and the service delivery model. This level of detail supports IEP eligibility arguments and helps document the medical necessity that most insurance payers require. Understanding the full SLP scope of practice can also help you articulate which domains fall within your professional authority when crafting recommendations.
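The elements of a specific recommendation can be treated as required fields, so that none is left out. This is a minimal sketch under that assumption; the `Recommendation` class, its field names, and the sample values are hypothetical and not drawn from any payer or district form.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    sessions_per_week: int
    minutes_per_session: int
    domains: list          # targeted communication domains
    delivery_model: str    # e.g., individual, small group, consultative

    def to_sentence(self) -> str:
        """Render the fields as one specific recommendation sentence."""
        return (
            f"Speech-language therapy is recommended "
            f"{self.sessions_per_week} times per week for "
            f"{self.minutes_per_session} minutes per session, "
            f"delivered as {self.delivery_model} sessions, targeting "
            f"{', '.join(self.domains)}."
        )


# Illustrative values only.
rec = Recommendation(
    sessions_per_week=2,
    minutes_per_session=30,
    domains=["receptive language", "expressive language"],
    delivery_model="individual",
)
print(rec.to_sentence())
```

Because every field is required, a recommendation cannot be created without a frequency, a session length, targeted domains, and a delivery model.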

Avoiding Common Report-Writing Pitfalls

Even experienced clinicians fall into patterns that weaken their reports. Watch for these common missteps:

- Omitting informal data such as language sample analysis, play-based observations, or caregiver-reported concerns. Standardized scores alone rarely capture the full picture, and informal data often provides the strongest evidence of functional impact.

- Copy-pasting test descriptions without interpretation. Listing subtest scores in a table is not the same as analyzing what those scores mean together. Readers need you to connect the dots.

- Failing to link results to functional communication needs. A report that ends with scores but never explains how those scores affect the client's ability to participate in school, work, or social life misses the point of the evaluation entirely.

- Using vague or boilerplate recommendation language that could apply to any client. Individualized, specific recommendations demonstrate clinical reasoning and strengthen your case for services.

Think of the evaluation report as the foundation for everything that follows: treatment goals, progress monitoring, re-evaluation decisions, and discharge planning. Selecting the right SLP assessment tools at the outset helps ensure you have the data to build a thorough, reader-friendly document that saves time down the road and supports better outcomes for the clients you serve.